Shahdadian S, Wang X, Kang S, Carter C, Liu H Neurophotonics, 2023 Jun 5 10(2):025012. Site-specific effects of 800- and 850-nm forehead transcranial photobiomodulation on prefrontal bilateral connectivity and unilateral coupling in young adults Goussi-Denjean C, Fontanier V, Stoll FM, Procyk E The Journal of Neuroscience, 2023 Jun 7 43(23):4329-4340.

The Differential Weights of Motivational and Task Performance Measures on Medial and Lateral Frontal Neural Activity Kraus F, Tune S, Obleser J, Herrmann B The Journal of Neuroscience, 2023 Jun 7 43(23):4352-4364. Neural α Oscillations and Pupil Size Differentially Index Cognitive Demand under Competing Audiovisual Task Conditions Ponce-Alvarez A, Kringelbach ML, Deco G Communications Biology, 2023 Jun 10 6:627.

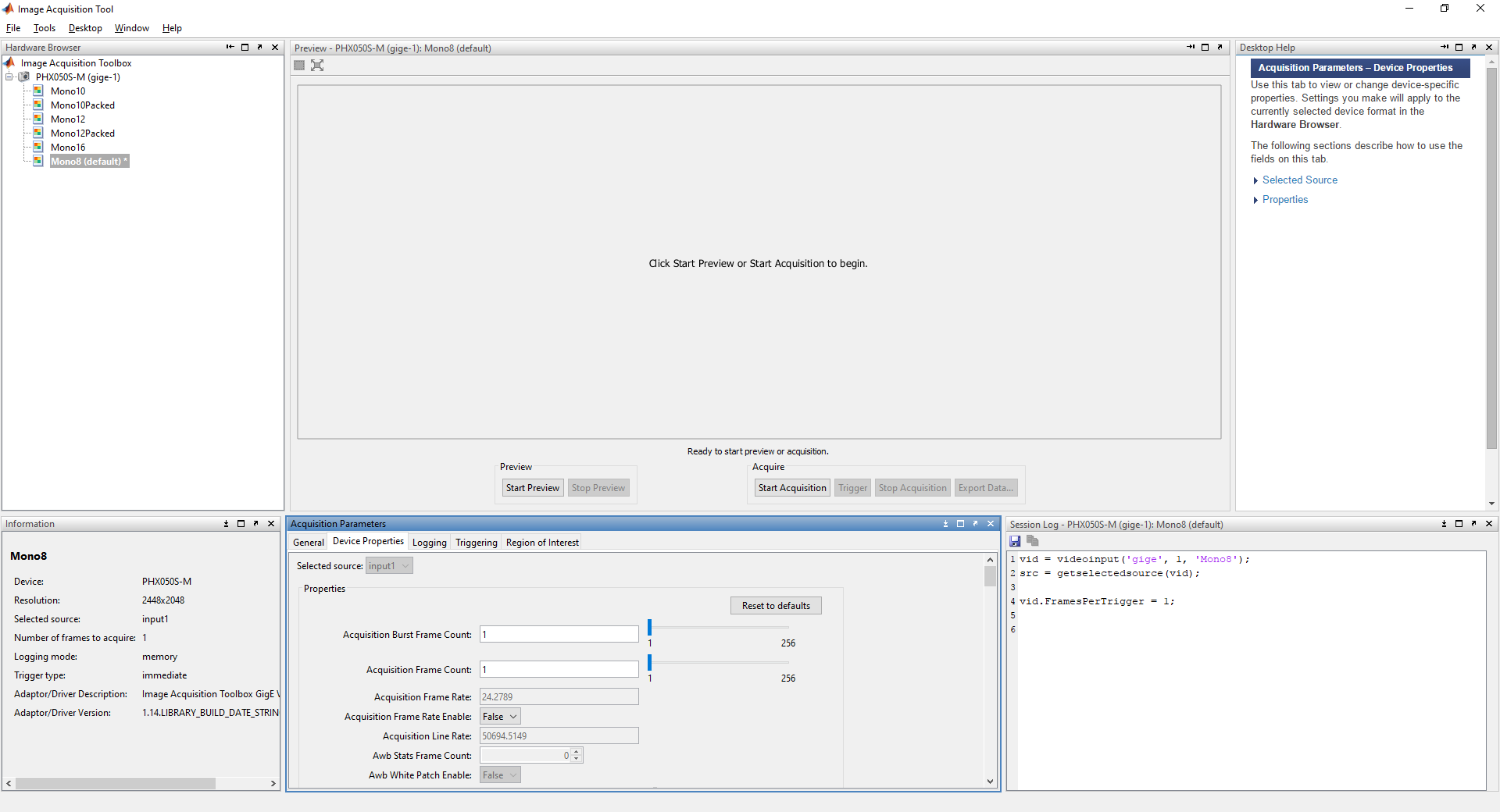

Critical scaling of whole-brain resting-state dynamics features There is a GUI make what you see is what you get. The videolabeler is released under the MIT License (refer to the LICENSE file for details). Pusil S, Zegarra-Valdivia J, Cuesta P, Laohathai C, Cebolla AM, Haueisen J, Fiedler P, Funke M, Maestú F, Cheron G Scientific Reports, 2023 Jun 11 13:9489. Providing a tool for conveniently label the objects in video, using the powerful object tracking. Effects of spaceflight on the EEG alpha power and functional connectivity HVideoIn = vision.VideoPlayer('Name', 'Final Video'. HtextinsCent = vision.TextInserter('Text', '+ X:%4d, Y:%4d'. Htextins = vision.TextInserter('Text', 'Number of Red Object: %2d'. HshapeinsRedBox = vision.ShapeInserter('BorderColor', 'Custom'. Hblob = vision.BlobAnalysis('AreaOutputPort', false.

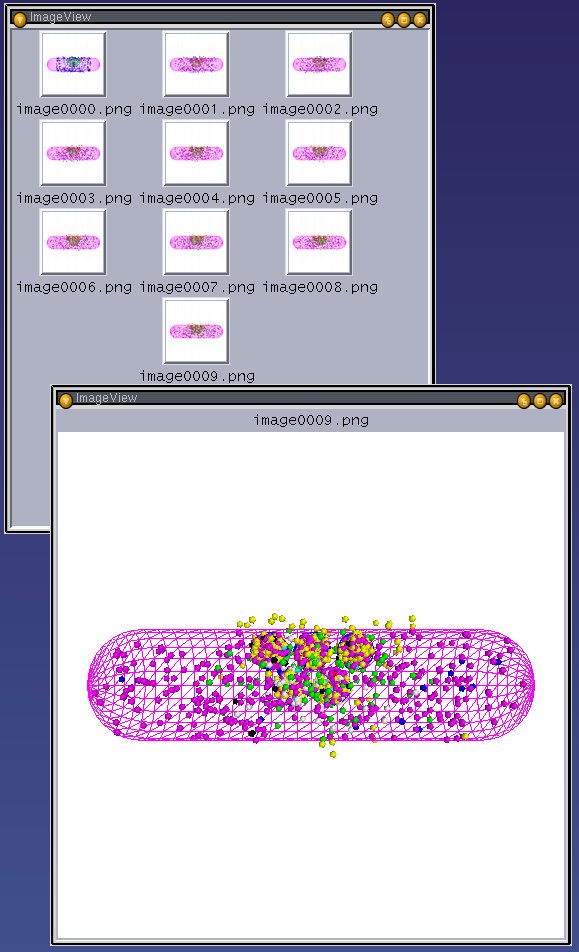

VidInfo = imaqhwinfo(vidDevice) % Acquire input video property RedThresh = 0.15 % Threshold for red detection % Description : How to detect and track red objects in Live Video % Program Name : Red Object Detection and Tracking Moreover, video processing is more largely spread in different real-time applications. Mainly, it is an effective method to implement video filters where the input and output of the system are videos streamed. The same algorithm I have introduced in my code. CONTIKI OS NS2 The practice of analysing a series of video frames is known as video processing. You can calculate the centroid, area or bounding box of those blobs. Now you can put any blob statistics analysis on this image. MATLAB Code: binFrame = im2bw(diffFrame, 0.15) Suppose in my code I have used its value as 0.15. Change its value for different light conditions. Step 6: Now convert the diffFrame into corresponding Binary Image using proper threshold value. MATLAB Code: diffFrame = medfilt2(diffFrame, ) Step 5: Filter out unwanted noises using Median Filter MATLAB Code: diffFrame = imsubtract(redFrame, grayFrame) Step 4: Subtract the grayFrame from the redFrame. MATLAB Code: grayFrame = rgb2gray(rgbFrame) Step 3: Get the grey image of the RGB frame. Step 2: Extract the Red Layer Matrix from the RGB frame. Step 1: First acquire an RGB Frame from the Video. Suppose our input video stream is handled by vidDevice object. So I am gonna use this approach to detect red color. So this approach is not versatile for all colors, but its simpler than anything and you can easily eliminate the ambient light problem using it. One more simple solution exists there if you decide to detect only red or green or blue color. in this way you can detect almost all distinguishable colors in a frame.īut this approach is a bit difficult in real life problem especially in Live video due to ambient light. So choose a range in which different shades of red exists. 
The popular approach is to convert the whole RGB frame into corresponding HSV (Hue-Saturation-Value) plane and extract the pixel values only for RED. To detect the red color in every single frame we need to know different approaches. Hi, Everyone, In this page, I want to show you how you can detect and track red objects in live video.
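The six steps can be tried end to end without a camera. The sketch below is only an illustration, not the original program: it assumes the Image Processing Toolbox is installed, replaces the frame from vidDevice with a synthetic gray image containing one red square, and assumes a 3-by-3 window for the median filter, since the source does not show that argument. The blob statistics use regionprops here rather than the vision.BlobAnalysis object from the program.

```matlab
% Sketch of Steps 1-6 on a synthetic frame (no camera needed).
% Assumes the Image Processing Toolbox is available.

% Step 1: stand-in for an acquired RGB frame (hypothetical test image):
% a mid-gray background with one red square, class double in [0, 1].
rgbFrame = 0.5 * ones(120, 160, 3);
rgbFrame(41:80, 61:100, 1)   = 1.0;   % red channel of the square
rgbFrame(41:80, 61:100, 2:3) = 0.1;   % green and blue channels of the square

% Step 2: extract the red layer matrix.
redFrame = rgbFrame(:, :, 1);

% Step 3: gray image of the RGB frame.
grayFrame = rgb2gray(rgbFrame);

% Step 4: red minus gray is large only where red dominates the pixel.
diffFrame = imsubtract(redFrame, grayFrame);

% Step 5: median filter to suppress isolated noise.
% The [3 3] neighborhood is an assumption, not taken from the source.
diffFrame = medfilt2(diffFrame, [3 3]);

% Step 6: binarize with the threshold used in the text.
% im2bw matches the post; imbinarize(diffFrame, RedThresh) is the newer call.
RedThresh = 0.15;
binFrame = im2bw(diffFrame, RedThresh);

% Blob statistics: area, centroid, and bounding box of each red region.
stats = regionprops(binFrame, 'Area', 'Centroid', 'BoundingBox');
for k = 1:numel(stats)
    fprintf('Blob %d: area %d, centroid (%.1f, %.1f)\n', ...
        k, stats(k).Area, stats(k).Centroid(1), stats(k).Centroid(2));
end
```

Subtracting the gray image is what makes the method tolerant of ambient light: a brighter or darker scene shifts the red layer and the gray image together, so their difference stays small everywhere except on strongly red pixels. If the mask picks up too much or too little under your lighting, adjust RedThresh as described in Step 6.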
